Is AI Homework Help Cheating? What Parents Need to Know

March 4, 2026

Most teenagers are using AI for schoolwork, and among those who do, over three quarters say that their classmates use it to cheat at least sometimes. Over the past two years, this has gone from a niche concern of only the most tech-forward parents and educators to an everyday occurrence. Parents can no longer just ignore AI in their child’s academics. As a data scientist, dad, and technologist, I am fascinated by and concerned about the impact on our children’s education. Let’s break down the numbers.

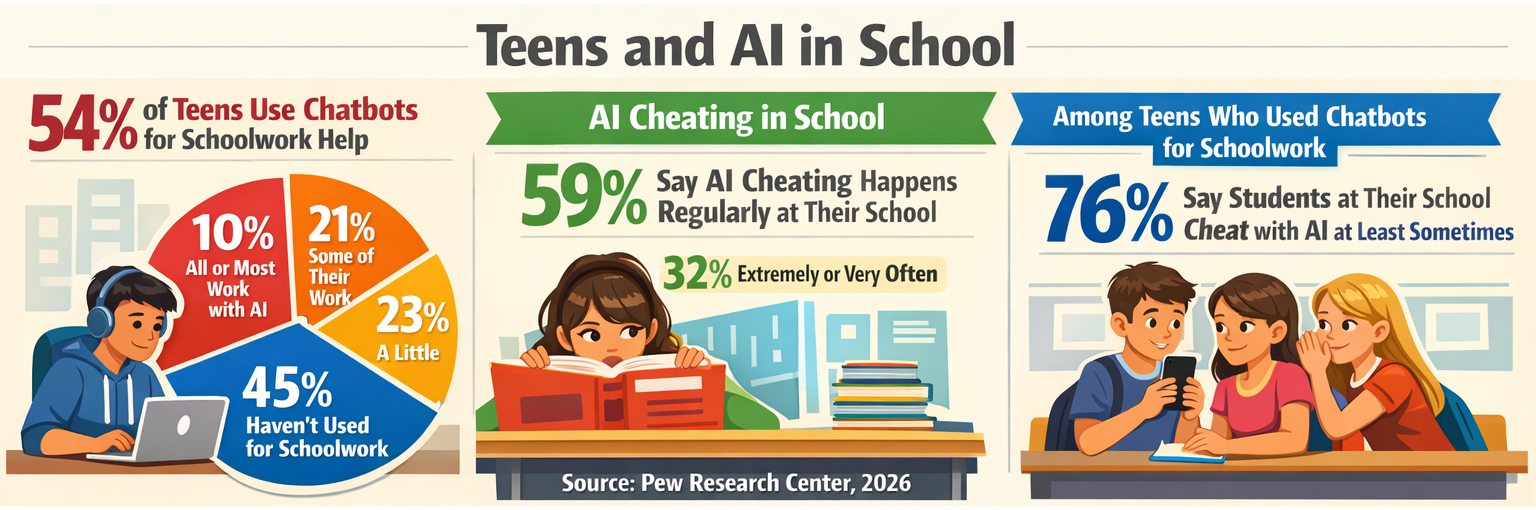

54% of teens use chatbots for some schoolwork help. Broken down: 10% do all or most schoolwork with AI, 21% do some, 23% do a little. Only 45% haven’t used them for school at all (Pew Research Center).

Is it right or wrong? Not all use of AI is cheating, but students seem to think, by and large, that it is being used to cheat.

59% of teens think using AI to cheat is a regular occurrence at their school; about a third say it happens extremely or very often. Among teens who’ve used chatbots for schoolwork, 76% say students at their school cheat with AI at least sometimes (Pew Research Center).

And AI use isn’t limited to schoolwork… here’s what else your kid is likely doing with it.

With the data obviously pointing at the problem, educators must act.

What are schools doing?

There are some tools available - plagiarism detection being the most prominent application. While the vendors themselves advertise sub-1% false positive rates, a Washington Post investigation found that number to be as high as 50% in testing (though with a smaller sample size). This is even more daunting for neurodivergent students and non-native English speakers.

This only covers (if we can call it that) writing. Detection tools for other subjects have yet to be created.

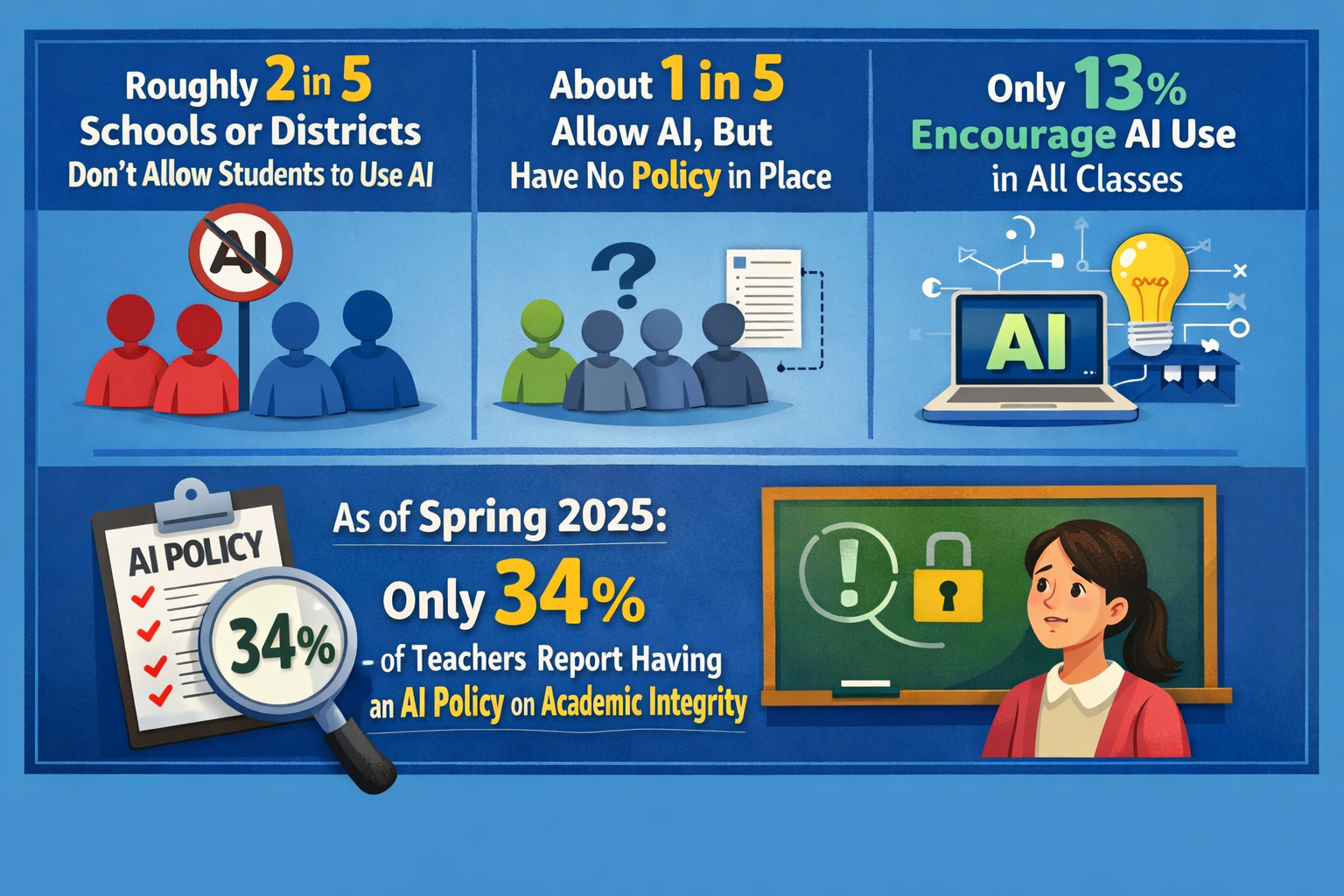

So if there are no tools available, can schools implement strong policy to prevent cheating and maintain scholastic excellence? Roughly 2 in 5 schools or districts don’t allow students to use AI at all. About 1 in 5 allow it but have no policy in place. Only 13% actively encourage AI use across all classes (College Board). As of spring 2025, only 34% of teachers reported having any school or district policy on AI related specifically to academic integrity (RAND).

Needless to say, it is a fluid situation. Things are moving quickly, and tools and policies are unable to keep up. I disagree with banning AI - it is a powerful tool. But we shouldn’t allow our students to hand their thinking over to AI.

Want to know what your child is actually asking AI? MyDD.ai shows you in real time. Try it free for 14 days.

Not all AI use is cheating

There are a number of uses where I think AI is extremely beneficial. You can set it up for your student, or your student can set it up for themselves, to provide custom help with the topics they are struggling with.

Your child doesn’t understand fractions as well as his classmates? Have the AI come up with extra questions based on his current level of understanding.

Your child is thinking of topics for the next science fair project? Have her go back-and-forth with an AI chatbot to brainstorm ideas.

They just finished a draft of an essay? Let AI check their grammar, spelling, and flow and propose improvements (that’s what I did with this very post).

The difference in these cases is that the student is still forced to think through the subject themselves. Writing to an AI forces them to explain what they don’t understand, which deepens their understanding. This isn’t a shortcut - it is critical discourse. It is a far cry from copying and pasting a homework question into ChatGPT and getting back an answer.

If it is good for kids, why are they cheating?

As has been true since biblical times, “there is nothing new under the sun.” Kids have cheated in the past and they are cheating now - AI is just a new avenue for it. Instead of asking another student to write the paper, they just ask ChatGPT.

To be fair to the students, though, they are using AI mostly because no one told them they couldn’t. On top of that, the pressure they face to do well pushes them toward tools that boost their performance, even when the use isn’t above board.

It also leaves parents in an awkward position. They, too, don’t necessarily know what is right or wrong here. So what to do? Parents need to talk to their children about how they are using AI. Lead with curiosity, not accusation. Discuss with your child the real-world ramifications of using AI and how it may impact their critical thinking and foundational job skills.

If you need more help with this conversation, check out our post on having the AI talk.

MyDD.ai’s approach

Our platform helps from both sides of the equation. For students, it provides age-appropriate guardrails and guides them through their academics using the Socratic method. For parents and educators, there is no more guessing at what your children or students are using AI for - you can see it clearly through the dashboard, weekly summaries, and real-time alerts.

For a full comparison with ChatGPT, see our head-to-head breakdown.